Abstract

The aim of human-object interaction (HOI) detection is to identify triplets consisting of a human, a verb, and an object. Existing methods leverage vision-language models (e.g., CLIP) to transfer textual information to unseen compositions. However, they often fail to capture fine-grained visual cues that are essential for complex interactions, such as spatial configurations and object affordances. In this paper, we introduce visual guidance as an alternative way to generalize to unseen HOI categories. We define a new visual-guided HOI detection task for the first time, which aims to detect unseen HOI categories using a small number of guidance examples. To support this new task, we construct a new benchmark dataset that accounts for the peculiarities of HOI and contains one base set and four novel sets. We then propose a VG-HOI model with a progressive guidance and query reconstruction module and a conditional uncoupling decoder. These components supplement common HOI knowledge and task-specific cues to improve the generalization capability of the model. In addition, we explore a new guidance sampling strategy for real-world scenarios, namely disentangled guidance. In-depth analysis of the experimental results shows that the proposed model improves generalization in visual-guided HOI detection.


1 Introduction

Human-object interaction (HOI) detection aims at localizing human-object pairs and recognizing their interactions, i.e., detecting the <human, verb, object> triplets in an image. It shows great potential in a range of downstream tasks, such as activity recognition, visual question answering, assistive robots, and visual surveillance.

Classical HOI detection methods [1–4] often adopted a two-stage pipeline to detect HOI triplets, i.e., first using an off-the-shelf detector to detect all instances in an image and then classifying the interaction between each pair. However, they might suffer from heavy computation costs when dealing with numerous human-object pairs. In contrast, one-stage methods [5] directly detected HOI triplets by defining interaction proposals. Later, inspired by the DETR approach [6], many researchers formulated the HOI detection task as a set prediction problem and designed various end-to-end transformer-based models [7–15].

Although the aforementioned approaches have demonstrated notable performance, most of them require a large number of annotated samples for training. As a result, they may struggle with long-tailed distributions and generalize poorly to unseen categories. To tackle these challenges, some works [16–19] introduced pre-trained vision-language foundation models (e.g., CLIP [20]) into HOI detectors and achieved promising results. However, CLIP-based methods may encounter difficulties when novel categories are hard to describe in text or are not covered by the pre-trained vision-language model. Another possible way to help HOI detectors generalize is to fine-tune them with a few guidance samples. However, this scheme adds computational cost and test time, which makes it inefficient, and it does not support incremental learning.

This raises the following question: if we want to bypass the inefficiency issue without resorting to pre-trained vision-language models, how can HOI models discover new concepts purely from a visual perspective?

Recently, some general models (e.g., SegGPT [21] and Painter [22]) have attempted to enhance their generalization capabilities for segmentation tasks by providing visual guidance. In these methods, a few annotated support images were used as prompts to indicate what should be segmented, providing a new way of offering guidance on new concepts. Drawing inspiration from this, in this paper, we propose to define a visual-guided HOI detection task for the first time, aiming at directly detecting HOIs of unseen categories, using only a few annotated examples for visual guidance.

To facilitate model training and evaluation for this new task, we construct a visual-guided HOI detection benchmark dataset based on HICO-DET [2]. HOI detection has a particular property: the verb and object categories jointly determine the final HOI category. We therefore split all HOI categories into one base set for training and four novel sets for testing: (1) unseen HOI compositions but seen objects and seen verbs, (2) unseen objects but seen verbs, (3) unseen verbs but seen objects, and (4) unseen objects and unseen verbs. We conduct a comprehensive evaluation of this new task.

To address this newly proposed task, we build on the DETR [6] architecture and propose a visual-guided human-object interaction (VG-HOI) detection model that explores and leverages crucial cues in visual guidance for HOI detection. Specifically, we first obtain human, object, and union prototypes from support images to inject guidance knowledge into the query image. These prototypes primarily embed adaptive HOI category information from the support images. We therefore use learnable common prototypes to reconstruct them, which supplements them with common HOI knowledge acquired from the base set. Next, we progressively reconstruct the query feature with the prototypes to make it aware of which HOI categories to detect. In the decoder, instead of a straightforward DETR decoding scheme, we propose a conditional uncoupling decoder. On the one hand, we use three separate decoders for humans, objects, and interactions to better capture the nature of HOI. On the other hand, we use support prototypes as prompts to incorporate category-specific cues into detection queries, which enables them to adapt quickly to unseen categories. Experimental results show that these designs effectively enhance VG-HOI performance.

Another important setup for visual-guided HOI detection is the guidance sampler. A straightforward way is to provide support images that contain the same HOI triplet as in the query image, which we call composition guidance. Nevertheless, humans naturally have the ability to learn HOI relations in a disentangled way and do not necessarily require exactly the same HOI support samples. To mimic this ability, we consider a disentangled guidance sampling strategy, which samples two semi-positive support images: one provides information about the object, and the other about the verb. We find that training with disentangled guidance improves generalization ability more than training with composition guidance, making it more beneficial for real-world applications.

Our main contributions can be summarized as follows.

1) To the best of our knowledge, we are the first to introduce the visual-guided HOI detection task, aiming to detect HOI of unseen categories using only a few visual guidance samples.
2) To support our proposed new task, we construct a new visual-guided HOI detection benchmark dataset and consider four novel sets for comprehensive evaluation.
3) We propose a novel VG-HOI model to mine and utilize guidance knowledge for visual-guided HOI detection. It includes a progressive guidance and query reconstruction module and a conditional uncoupling decoder for improving the model’s generalization ability.
4) To mimic the disentangled learning capability of humans, we introduce a new and more practical support sampling strategy, i.e., disentangled guidance. Experimental results have demonstrated the benefits of the strategy in developing stronger generalization abilities.

2 Related work

2.1 Generic HOI detection

Generic HOI detection methods can be classified into two categories, i.e., two-stage methods and one-stage methods. Two-stage methods [1–3] often resorted to an off-the-shelf detector (e.g., Faster R-CNN [23]) to detect all humans and objects first. They then associated the detections one by one to obtain human-object proposal pairs, which were used for interaction classification to generate the final results. This sequential scheme was easily distracted by the many negative pairs when it used local features to decide which pairs were interactive. Besides, it incurred significant computational costs when processing numerous potential human-object combinations. To overcome these limitations, one-stage methods were developed to detect HOI triplets directly from an image without using pre-trained object detectors. Some work [5] proposed to define interaction proposals in advance using human priors. Nevertheless, such heuristically defined interactions might adapt poorly or become invalid in complex scenes.

Inspired by the DETR approach [6], many researchers formulate the HOI detection task as a set prediction problem, giving rise to a new trend of one-stage methods based on transformer architectures. Some researchers [7, 8] directly modified the object queries in DETR [6] into HOI queries and used a single decoder to predict the final HOI triplets. Others [9, 10] designed two types of queries and adopted different decoders to obtain human/object instances and interactions, respectively, which were then matched to generate the final HOI results. Refs. [24, 25] developed human-object pair queries that first predict a set of interactive human-object pairs and then predict the interaction of each pair, without the need for matching strategies. Since then, many researchers have devoted themselves to boosting the performance of transformer-based models by refining the initialized queries [26–28], employing knowledge distillation [29], leveraging pre-trained visual-linguistic models [30], and exploring multi-scale features [31]. Although these methods have achieved promising results, they rely on a large number of annotated training samples, which are laborious to acquire. Without abundant training samples, they easily fail and cannot handle unseen categories. We therefore propose visual-guided HOI detection, which can detect unseen HOIs with only a few guidance samples.

2.2 CLIP-based HOI detection

To improve the generalization ability of HOI detectors on novel categories, some work [30, 32] started to train a transferable end-to-end HOI detector based on a pre-trained vision-language model, i.e., CLIP [20]. Specifically, THID [32] distilled and leveraged the transferable knowledge from the pre-trained CLIP model to train its model. The GEN-VLKT method [30] tried to use the text embedding in CLIP to initialize the weights of classifiers and perform feature-level knowledge distillation from pre-trained CLIP features. Next, HOICLIP [33] pointed out the issues of knowledge distillation and thus turned to directly retrieving useful cues from CLIP. SICHOI [34] replaced simple text prompts with a syntactic interaction bank (capturing spatial relationships, action-oriented postures, and situational conditions) to eliminate ambiguity in VLM-based HOI detection. ContextHOI [18] pioneered a dual-branch framework with spatially contrastive constraints to decouple instance and context features for robust human-object interaction detection, especially in occluded scenarios. BC-HOI [19] introduced a bilateral collaboration framework that guides VLMs to generate fine-grained instance-level features via attention bias guidance and enhances HOI detector supervision with LLM-based token-level guidance. Besides, Refs. [35, 36] adopted a two-stage framework focusing on leveraging CLIP priors to improve interaction predictions. CLIP4HOI [37] decoupled instance localization from interaction recognition to overcome positional distribution bias. BCOM [38] pioneered bilateral adaptation that synergizes CLIP’s semantic knowledge with detector-derived spatial features via lightweight adapters.

Another line of research was to perform the pre-training process. For instance, Refs. [39, 40] proposed a relational language-image pre-training strategy. Ref. [41] formulated HOI learning as a sequence generation task and then constructed pre-training data and different proxy tasks for pre-training. Instead of leveraging pre-trained models or strategies, this paper explores the use of visual guidance to enhance the model’s ability to generalize to unseen categories.

2.3 Visual-guided perception

Some researchers focus on introducing visual guidance to enhance the generalization ability of visual perception. For example, one group of studies [42–48] used reference images to guide the detection or segmentation of target images. After training, these models could detect or segment unseen classes with low-shot visual guidance. Another group of studies [21, 22, 49–51] proposed more versatile and practical visual-guided models, which explored various types of visual guidance, such as points, scribbles, and masks. Building on previous research, we propose introducing visual guidance into HOI detection, an area that has not been explored in prior studies.

3 Proposed setup

3.1 Problem definition

Given a query image, we sample a support set for providing visual guidance. The support set consists of C HOI categories, with one annotated sample for each category. The goal of the task is to find instances of these C HOI categories in the query image under the guidance of the support set and to predict the bounding boxes of the related humans and objects. Overall, the HOI detector is trained on a base set with seen categories and tested on novel sets with unseen categories.

3.2 New visual-guided HOI detection benchmark

Traditional visual-guided tasks (e.g., object detection [42] and semantic segmentation [52–54]) only consider the object category instead of the triplet composition in HOI. Thus, they directly split seen objects and unseen objects into the base set and novel set, respectively.

In the HOI field, some HOI recognition methods [55, 56] keep seen and unseen objects disjoint so that all HOI categories are divided into a base set and a novel set. They only consider whether an HOI composition and its object were seen during meta-training.

In HOI detection, an HOI composition category is determined by both its verb and its object category. For this reason, some HOI detection methods [30, 57–59] consider separately whether an HOI composition, a verb, and an object were seen. Accordingly, they construct three test sets, i.e., unseen composition, unseen verb, and unseen object. However, these setups do not consider the case where both the object and the verb are unseen, which limits their practical applicability.

In this paper, we take a step further and explicitly consider whether each category type (i.e., HOI composition, verb, and object) is seen. Based on HICO-DET [2], we construct a visual-guided HOI detection benchmark dataset that contains a base set and four novel sets. The base set consists of seen objects and seen verbs. The four novel sets cover unseen composition (UC), unseen object (UO), unseen verb (UV), and unseen object and verb (UOUV), respectively. Note that our UC, UO, and UV sets differ from those in Refs. [30, 57–59]. For example, the UO set removes the effect of verbs and is defined as unseen objects but seen verbs. Cases where both objects and verbs are unseen go into the UOUV set, which has not been explicitly considered before. The other sets are constructed similarly, and detailed descriptions are given in Table 1.
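The four-way categorization can be written as a small decision rule. The sketch below is illustrative only. `assign_split`, the toy verb and object vocabularies, and the base compositions are hypothetical and do not reproduce the actual split reported in Table 1.

```python
def assign_split(verb, obj, seen_verbs, seen_objects, base_pairs):
    """Return the benchmark set that the HOI category <verb, obj> belongs to.

    seen_verbs / seen_objects: verbs and objects that occur in the base set.
    base_pairs: (verb, object) compositions used for training.
    """
    verb_seen = verb in seen_verbs
    obj_seen = obj in seen_objects
    if verb_seen and obj_seen:
        # Both parts are known; only the composition itself can be new.
        return "base" if (verb, obj) in base_pairs else "UC"
    if verb_seen:
        return "UO"    # unseen object, seen verb
    if obj_seen:
        return "UV"    # unseen verb, seen object
    return "UOUV"      # unseen object and unseen verb


# Hypothetical toy vocabulary (not the benchmark's real split).
SEEN_VERBS = {"ride", "hold"}
SEEN_OBJECTS = {"horse", "cup"}
BASE_PAIRS = {("ride", "horse"), ("hold", "cup")}

for v, o in [("ride", "horse"), ("hold", "horse"), ("hold", "sheep"),
             ("feed", "horse"), ("feed", "sheep")]:
    print(v, o, assign_split(v, o, SEEN_VERBS, SEEN_OBJECTS, BASE_PAIRS))
```

Because UO requires a seen verb and UV requires a seen object, every category falls into exactly one set. This is what allows a single model trained on the base set to be evaluated on all four novel sets.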

To simplify the overall training and testing setup, we find an optimal base-novel split with a balanced number of categories in each base or novel set. With this split, we can train a single model on the base set and directly test it on all four novel sets. This also makes comparisons and analyses across all sets fairer and more feasible. In contrast, the three-set setup in Refs. [30, 57–59] requires training three models on three base sets and evaluating each novel set individually.

4 Proposed method

To deeply mine and utilize the crucial knowledge in visual guidance, we propose a visual-guided human-object interaction detection (VG-HOI) model for visual-guided HOI detection. The overall architecture is illustrated in Fig. 1. Given a query image and support images with instance annotations, we first use a shared backbone to extract their feature maps. Then, we divide the support guidance to extract human, object, and union prototypes. Next, we propose a visual-guided feature reconstruction module to reconstruct query features under the guidance of the prototypes. Finally, we propose a conditional uncoupling decoder to perform instance and interaction predictions.

Fig. 1 Overall architecture of our proposed visual-guided human-object interaction detection (VG-HOI) model. F is the feature of the query image. H, O, and U are the human, object, and union prototypes extracted from support images, respectively. \(\boldsymbol{F}_{\mathrm{h}}\), \(\boldsymbol{F}_{\mathrm{o}}\), and \(\boldsymbol{F}_{\mathrm{u}}\) are the query features guided by human, object, and union prototypes, respectively. \(\boldsymbol{T}_{\mathrm{h}}\), \(\boldsymbol{T}_{\mathrm{o}}\), and \(\boldsymbol{T}_{\mathrm{u}}\) are the encoded results of the corresponding guided query features. \(\boldsymbol{Q}_{\mathrm{h}}\), \(\boldsymbol{Q}_{\mathrm{o}}\), and \(\boldsymbol{Q}_{\mathrm{v}}\) are the initial human, object, and interaction query tokens, respectively. \(\boldsymbol{P}_{\mathrm{h}}\), \(\boldsymbol{P}_{\mathrm{o}}\), and \(\boldsymbol{P}_{\mathrm{u}}\) are the human, object, and union prototype prompts, respectively. \(\boldsymbol{Q}_{\mathrm{h}}'\), \(\boldsymbol{Q}_{\mathrm{o}}'\), and \(\boldsymbol{Q}_{\mathrm{v}}'\) are the updated human, object, and interaction query tokens after decoding. \(s^{\mathrm{o}}_{i}\) and \(b^{\mathrm{o}}_{i}\) are the category score and bounding box of the i-th object, respectively. \(b^{\mathrm{h}}_{i}\) is the bounding box of the i-th human. \(s^{\mathrm{v}}_{i}\) is the verb score of the i-th interaction. Given the query feature and support features extracted by a shared backbone, we first extract human, object, and union prototypes to provide guidance information. Next, the visual-guided query reconstruction (VGQR) module reconstructs the query feature map from the prototypes and incorporates common HOI knowledge. Finally, the conditional uncoupling decoder, which consists of three decoders, better captures the nature of HOI and uses visual guidance to provide task-specific cues.

4.1 Backbone

Given a query image and C support images, we use a shared convolutional neural network (CNN) to extract their features. These features are then flattened into sequences: the query features \(\boldsymbol{F}\in{\mathbb{R}^{HW\times d}}\) and the support features \(\boldsymbol{S}_{\mathrm{supp}} \in{\mathbb{R}^{C \times H'W'\times d}}\). Here, HW and \(H'W'\) denote the spatial sizes of the query and support feature maps, respectively, and d denotes the number of channels.

4.2 Visual guidance extraction

Since HOI triplets include humans, objects, and their interactions, we propose to divide the support guidance, i.e., using human, object, and union bounding boxes to generate three types of prototypes.

Specifically, for each type of prototype, we apply ROIAlign [60] with the corresponding ground-truth bounding box to extract the region from the support feature \(\boldsymbol{S}_{\mathrm{supp}}\). We then apply average pooling to produce the final prototype. In other words, the human, object, and union bounding boxes yield the human, object, and union prototypes \(\boldsymbol{H},\boldsymbol{O},\boldsymbol{U}\in{\mathbb{R}^{C\times d}}\), which carry human, object, and verb information, respectively. The whole process can be formulated as follows:
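The following is one plausible form of this step. It assumes that the ROIAlign output is reduced by global average pooling (written AvgPool) and that each operation is applied independently to each of the C support images.

\[
\boldsymbol{H} = \mathrm{AvgPool}\big(\mathrm{ROIAlign}(\boldsymbol{S}_{\mathrm{supp}}, B_{\mathrm{h}})\big),\quad
\boldsymbol{O} = \mathrm{AvgPool}\big(\mathrm{ROIAlign}(\boldsymbol{S}_{\mathrm{supp}}, B_{\mathrm{o}})\big),\quad
\boldsymbol{U} = \mathrm{AvgPool}\big(\mathrm{ROIAlign}(\boldsymbol{S}_{\mathrm{supp}}, B_{\mathrm{u}})\big)
\]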

where \(B_{\mathrm{h}}\), \(B_{\mathrm{o}}\), and \(B_{\mathrm{u}}\) represent the bounding boxes of human, object, and union prototypes, respectively.
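The sketch below makes the prototype step concrete for a single support image. It is a simplification that rests on several assumptions. Boxes are given in integer feature-map coordinates. The union box is taken to be the smallest box enclosing the human and object boxes, the usual convention in HOI detection, although the text does not define it. A plain crop-and-average stands in for ROIAlign followed by average pooling. The names `union_box` and `box_avg_pool` are hypothetical.

```python
def union_box(b_h, b_o):
    """Smallest box (x1, y1, x2, y2) enclosing the human box and the object box."""
    return (min(b_h[0], b_o[0]), min(b_h[1], b_o[1]),
            max(b_h[2], b_o[2]), max(b_h[3], b_o[3]))


def box_avg_pool(feat, box):
    """Average the d-dim feature vectors of the grid cells inside an integer box.

    feat is an H' x W' grid of d-dim vectors (nested lists). This crops cells
    [y1, y2) x [x1, x2) and averages them; real ROIAlign instead samples the
    box with bilinear interpolation on a fixed output grid.
    """
    x1, y1, x2, y2 = box
    cells = [feat[y][x] for y in range(y1, y2) for x in range(x1, x2)]
    d = len(cells[0])
    return [sum(c[k] for c in cells) / len(cells) for k in range(d)]


# Toy 4 x 4 support feature map with d = 2: the vector at (x, y) is [x, y].
feat = [[[float(x), float(y)] for x in range(4)] for y in range(4)]
b_h, b_o = (0, 0, 2, 2), (2, 1, 4, 3)
b_u = union_box(b_h, b_o)
H_proto, O_proto, U_proto = (box_avg_pool(feat, b) for b in (b_h, b_o, b_u))
print(b_u, H_proto, O_proto, U_proto)
```

Stacking the per-image vectors over the C support images would give the C × d prototype matrices.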

4.3 Visual-guided query reconstruction

After obtaining the three types of support prototypes, we use them to reconstruct the query feature map for providing guidance information.

Query feature reconstruction

We start by using the original prototypes to reconstruct the query feature map with the attention mechanism, as suggested by Ref. [61]. The human-guided query feature \(\boldsymbol{F}_{\mathrm{h}}\) is used here as an example.

Initially, we use a shared linear layer to embed both the prototype and the query feature into the same feature space. We then compute a matching coefficient \(\boldsymbol{A}\in{\mathbb{R}^{HW\times C}}\) between them, as expressed in Eq. (2):
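Eq. (2) plausibly takes the following form. This assumes a scaled dot-product similarity normalized with a softmax over the C support categories; both the \(\sqrt{d}\) scaling and the softmax axis are assumptions.

\[
\boldsymbol{A} = \operatorname{softmax}\!\left(\frac{(\boldsymbol{F}\boldsymbol{W}_{\mathrm{h}})(\boldsymbol{H}\boldsymbol{W}_{\mathrm{h}})^{\top}}{\sqrt{d}}\right)\in\mathbb{R}^{HW\times C}
\]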

where \(\boldsymbol{W}_{\mathrm{h}}\) represents a linear projection. Subsequently, we apply A to the original prototypes H to obtain a reconstructed query feature map \(\boldsymbol{F}_{\mathrm{h}}'\) via Eq. (3):
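Assuming no separate value projection, Eq. (3) plausibly writes each reconstructed position as an A-weighted combination of the prototypes:

\[
\boldsymbol{F}_{\mathrm{h}}' = \boldsymbol{A}\boldsymbol{H}\in\mathbb{R}^{HW\times d}
\]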

Similarly, using the object and union prototypes, we can obtain \(\boldsymbol{F}_{\mathrm{o}}'\) and \(\boldsymbol{F}_{\mathrm{u}}'\), respectively.

Progressive guidance and query reconstruction

In Eq. (3), the original prototypes can only provide features of the given (possibly unseen) support categories when reconstructing the query feature map. We argue that supplementing these prototypes with common HOI knowledge can benefit the detection process. For example, human features can generally be represented by several common body-part features, and objects involved in an interaction share a close spatial relationship with the human. Such common HOI knowledge is shared between seen and unseen categories and can therefore be learned effectively from the seen categories in the base set.

To this end, we propose to first reconstruct the prototypes by introducing a group of learnable common prototypes for encoding common HOI knowledge, and then use the reconstructed prototypes to obtain a reconstructed query feature, as shown in Fig. 2.

Fig. 2 Details of visual-guided query reconstruction. S is the learnable common prototype (encoding shared HOI knowledge). \(\boldsymbol{H}_{\mathrm{r}}\) is the reconstructed human prototype (fused with common knowledge from S). \(\boldsymbol{F}_{\mathrm{h}}'\) is the query feature reconstructed using the original H. \(\boldsymbol{F}_{\mathrm{r}}\) is the query feature reconstructed using \(\boldsymbol{H}_{\mathrm{r}}\). Q, K, and V represent the query, key, and value matrices in the attention operations, respectively. First, H (from the support set) is combined with S via attention to generate \(\boldsymbol{H}_{\mathrm{r}}\). Then, the query feature F is reconstructed twice: first using H to calculate \(\boldsymbol{F}_{\mathrm{h}}'\), and second using \(\boldsymbol{H}_{\mathrm{r}}\) to calculate \(\boldsymbol{F}_{\mathrm{r}}\). Finally, \(\boldsymbol{F}_{\mathrm{h}}'\) and \(\boldsymbol{F}_{\mathrm{r}}\) are fused, followed by an “Add & Norm” operation to produce the refined \(\boldsymbol{F}_{\mathrm{h}}\). The “Add & Norm” operation represents a residual connection followed by normalization, applied after the feed-forward network (FFN).

Specifically, we randomly initialize learnable common prototypes \(\boldsymbol{S}\in{\mathbb{R}^{N_{\mathrm{s}}\times d}}\), where \(N_{\mathrm{s}}\) denotes the number of common prototypes and d denotes the number of channels. We then obtain the reconstructed prototypes \(\boldsymbol{H}_{\mathrm{r}}\in{\mathbb{R}^{C\times d}}\), where C is the number of support images, by aggregating common knowledge via cross-attention. The whole process can be formulated as follows:
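The following is one plausible form of this step. It assumes single-head scaled dot-product cross-attention in which the prototypes H act as queries and the common prototypes S serve as both keys and values.

\[
\boldsymbol{H}_{\mathrm{r}} = \operatorname{softmax}\!\left(\frac{(\boldsymbol{H}W_{\mathrm{q}})(\boldsymbol{S}W_{\mathrm{k}})^{\top}}{\sqrt{d}}\right)\boldsymbol{S}\in\mathbb{R}^{C\times d}
\]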

where \(W_{\mathrm{q}}\) and \(W_{\mathrm{k}}\) are linear projections for H and S, respectively. S is the learnable common prototype.

After obtaining the reconstructed prototypes \(\boldsymbol{H}_{\mathrm{r}}\), we utilize the matching coefficient A in Eq. (2) to reconstruct the query feature \(\boldsymbol{F}_{\mathrm{r}}\) using Eq. (5):
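Eq. (5) plausibly mirrors Eq. (3): it reuses the same coefficient A and, again, assumes no extra projection.

\[
\boldsymbol{F}_{\mathrm{r}} = \boldsymbol{A}\boldsymbol{H}_{\mathrm{r}}\in\mathbb{R}^{HW\times d}
\]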

Finally, the two types of reconstructed feature maps \(\boldsymbol{F}_{\mathrm{h}}'\) and \(\boldsymbol{F}_{\mathrm{r}}\) based on the original and reconstructed prototypes are fused together, producing the final human-guidance reconstructed query feature map \(\boldsymbol{F}_{\mathrm{h}}\in{\mathbb{R}^{HW \times d}}\), formulated as
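Here we assume that the fusion is an element-wise sum, written X, and that the “Add & Norm” step uses layer normalization (LN). Under these assumptions, the fusion plausibly reads

\[
\boldsymbol{F}_{\mathrm{h}} = \mathrm{LN}\big(\boldsymbol{X} + \mathrm{FFN}(\boldsymbol{X})\big),\qquad \boldsymbol{X} = \boldsymbol{F}_{\mathrm{h}}' + \boldsymbol{F}_{\mathrm{r}}
\]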

where FFN denotes the feed-forward network.

Similarly, we can obtain the object-guidance reconstructed query feature \(\boldsymbol{F}_{\mathrm{o}}\in{\mathbb{R}^{HW\times d}}\), and the union-guidance reconstructed query feature \(\boldsymbol{F}_{\mathrm{u}}\in{\mathbb{R}^{HW\times d}}\) by following the aforementioned process.
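The human branch described above can be sketched end to end in plain Python. This toy illustration rests on assumptions that the text does not fix: single-head scaled dot-product attention, the common prototypes S used directly as keys and values, fusion by element-wise addition, layer normalization for the “Norm” step, and a bias-free ReLU FFN. All function names are hypothetical.

```python
import math


def matmul(a, b):
    """Product of two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    return [list(r) for r in zip(*m)]


def softmax_rows(m):
    """Row-wise softmax with max subtraction for numerical stability."""
    out = []
    for row in m:
        mx = max(row)
        e = [math.exp(v - mx) for v in row]
        total = sum(e)
        out.append([v / total for v in e])
    return out


def attend(queries, keys, values, w_q, w_k):
    """Single-head attention: softmax((queries w_q)(keys w_k)^T / sqrt(d)) values."""
    d = len(w_q)
    logits = matmul(matmul(queries, w_q), transpose(matmul(keys, w_k)))
    weights = softmax_rows([[v / math.sqrt(d) for v in row] for row in logits])
    return weights, matmul(weights, values)


def layer_norm(row, eps=1e-5):
    mu = sum(row) / len(row)
    var = sum((v - mu) ** 2 for v in row) / len(row)
    return [(v - mu) / math.sqrt(var + eps) for v in row]


def ffn(row, w1, w2):
    """Two-layer feed-forward network with ReLU (no biases, for brevity)."""
    hidden = [max(0.0, v) for v in matmul([row], w1)[0]]
    return matmul([hidden], w2)[0]


def human_guided_feature(F, H, S, w_h, w_q, w_k, w1, w2):
    """F: HW x d query features, H: C x d human prototypes, S: Ns x d common prototypes."""
    A, F_prime = attend(F, H, H, w_h, w_h)  # Eqs. (2)-(3): A is HW x C, F'_h = A H
    _, H_r = attend(H, S, S, w_q, w_k)      # prototypes gather common knowledge from S
    F_r = matmul(A, H_r)                    # Eq. (5): same A, reconstructed prototypes
    # Assumed fusion: element-wise sum, then FFN with a residual "Add & Norm".
    X = [[a + b for a, b in zip(r1, r2)] for r1, r2 in zip(F_prime, F_r)]
    F_h = [layer_norm([x + y for x, y in zip(row, ffn(row, w1, w2))]) for row in X]
    return A, F_h


I2 = [[1.0, 0.0], [0.0, 1.0]]                # identity projections for the toy run
F = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]     # HW = 3 query positions, d = 2
H = [[1.0, 0.0], [0.0, 1.0]]                 # C = 2 support prototypes
S = [[0.5, 0.5], [1.0, -1.0], [0.0, 2.0]]    # Ns = 3 common prototypes
A, F_h = human_guided_feature(F, H, S, I2, I2, I2, I2, I2)
print([round(sum(r), 6) for r in A], len(F_h), len(F_h[0]))
```

The object and union branches would call the same function with O or U in place of H. Reusing A for both reconstructions means the common knowledge is routed to each query position with the same weights as the original prototypes.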

At the same time, following Ref. [44], we use the matching coefficient A to aggregate three distinct groups of task position encodings \(\boldsymbol{T}_{\mathrm{pe}}\in{\mathbb{R}^{C\times d}}\) (the subscript “pe” stands for position encoding) and combine them with \(\boldsymbol{F}_{\mathrm{h}}\), \(\boldsymbol{F}_{\mathrm{o}}\), and \(\boldsymbol{F}_{\mathrm{u}}\), respectively. These encodings provide category discrimination information for the C given support categories, enabling class-agnostic classification.

Next, we apply three separate shared transformer encoders to \(\boldsymbol{F}_{\mathrm{h}}\), \(\boldsymbol{F}_{\mathrm{o}}\), and \(\boldsymbol{F}_{\mathrm{u}}\). This method separates the three encoding procedures from each other, producing the human-guided query encoder feature \(\boldsymbol{T}_{\mathrm{h}}\), the object-guided query encoder feature \(\boldsymbol{T}_{\mathrm{o}}\), and the union-guided query encoder feature \(\boldsymbol{T}_{\mathrm{u}}\).

4.4 Conditional uncoupling decoder

Given the encoded features, a direct approach is to follow DETR [6] and use a single decoder with HOI queries to perform HOI detection. However, such a scheme may have two shortcomings. First, HOI triplets contain three types of information, i.e., human instances, object instances, and interactions, which relate to different image regions. This makes it difficult for a single decoder to model all of them. Second, the queries in DETR-like detectors (e.g., Anchor-DETR [62], Conditional-DETR [63], and DAB-DETR [64]) usually leave the semantic content embeddings blank and update them during decoding. This often leads to unstable matching with ground-truth instances and slow optimization. In our task, however, the HOI categories to be detected are directly provided by the support set, and this information can be used to boost the decoding process.

To address these issues, we develop a conditional uncoupling decoder. It separates the single decoder into three decoders for a clearer understanding of the HOI nature and includes visual guidance as prompts to introduce task-specific cues into learnable queries. The inclusion of guidance cues equips the queries with the ability to adapt quickly to unseen categories. As a result, the model’s generalization capability improves, allowing it to better handle open-set tasks.

Specifically, for the human and object decoders, we start by randomly initializing N human queries \(\boldsymbol{Q}_{\mathrm{h}}\in{\mathbb{R}^{N\times d}}\) and N object queries \(\boldsymbol{Q}_{\mathrm{o}}\in{\mathbb{R}^{N\times d}}\). Next, we use the human and object prototypes \(\boldsymbol{H},\boldsymbol{O}\in{\mathbb{R}^{C\times d}}\) as prompts. We concatenate them with the corresponding human and object queries to generate conditional human queries and conditional object queries, respectively. Note that a learnable position embedding matrix \(\boldsymbol{P}\in{\mathbb{R}^{(N+C)\times d}}\) is introduced to help the decoder identify the prompts.

Next, conditional human queries and conditional object queries are fed into the corresponding transformer decoder layers [63] to update themselves using cross-attention with the encoder feature \(\boldsymbol{T}_{\mathrm{h}}\) and \(\boldsymbol{T}_{\mathrm{o}}\), respectively. The above processes can be formulated as
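Assuming the learnable position embedding \(\boldsymbol{P}\) is added to the concatenated tokens before decoding, the two updates can plausibly be written as

\[
\boldsymbol{Q}_{\mathrm{h}}' = \text{Dec}_{\mathrm{h}}\big([\boldsymbol{Q}_{\mathrm{h}};\boldsymbol{H}] + \boldsymbol{P},\ \boldsymbol{T}_{\mathrm{h}}\big),\qquad
\boldsymbol{Q}_{\mathrm{o}}' = \text{Dec}_{\mathrm{o}}\big([\boldsymbol{Q}_{\mathrm{o}};\boldsymbol{O}] + \boldsymbol{P},\ \boldsymbol{T}_{\mathrm{o}}\big)
\]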

where \([;]\) means concatenation, \(\text{Dec}_{\mathrm{h}}\) and \(\text{Dec}_{\mathrm{o}}\) represent the human decoder and object decoder, respectively. In our experiments, the parameters of the human and object decoders are shared.
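The token bookkeeping for a conditional query can be sketched as follows. This is a minimal illustration under stated assumptions. The position embedding is added element-wise after concatenation. The C prompt tokens are assumed to be set aside after decoding, so that only the N query tokens feed the prediction heads; the text does not say so explicitly. Both function names are hypothetical.

```python
def build_conditional_queries(queries, prompts, pos_embed):
    """Return [Q; prompts] + P.

    queries: N x d learnable queries; prompts: C x d support prototypes;
    pos_embed: (N + C) x d learnable position embedding.
    """
    tokens = queries + prompts  # concatenate along the token axis -> (N + C) x d
    if len(tokens) != len(pos_embed):
        raise ValueError("position embedding must have N + C rows")
    return [[t + p for t, p in zip(tok, pe)] for tok, pe in zip(tokens, pos_embed)]


def split_decoder_output(decoded, n_queries):
    """Separate the N query tokens (used for prediction) from the C prompt tokens."""
    return decoded[:n_queries], decoded[n_queries:]


N, C = 2, 1
Q_h = [[0.1, 0.2], [0.3, 0.4]]           # N = 2 human queries, d = 2
H = [[1.0, 1.0]]                         # C = 1 human prototype used as prompt
P = [[0.0, 0.0], [0.0, 0.0], [0.5, -0.5]]
cond = build_conditional_queries(Q_h, H, P)
# A real model would pass `cond` through Dec_h first; here the undecoded tokens
# are split only to show the indexing.
queries_out, prompts_out = split_decoder_output(cond, N)
print(cond)
```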

Finally, each query i predicts human-object bounding-box pairs \((b^{\mathrm{h}}_{i}, b^{\mathrm{o}}_{i})\) and the object category score \(s^{\mathrm{o}}_{i}\in{\mathbb{R}^{C}}\) for the C support object categories. This is formulated as follows:
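One plausible form of this step assumes sigmoid-normalized box coordinates, as in DETR [6], and separate FFN heads. The head subscripts are our notation, and \(\boldsymbol{Q}_{\mathrm{h},i}'\) and \(\boldsymbol{Q}_{\mathrm{o},i}'\) denote the i-th output human and object query tokens.

\[
b^{\mathrm{h}}_{i} = \sigma\big(\mathrm{FFN}_{\mathrm{h}}(\boldsymbol{Q}_{\mathrm{h},i}')\big),\quad
b^{\mathrm{o}}_{i} = \sigma\big(\mathrm{FFN}_{\mathrm{o}}(\boldsymbol{Q}_{\mathrm{o},i}')\big),\quad
s^{\mathrm{o}}_{i} = \mathrm{FFN}_{\mathrm{c}}(\boldsymbol{Q}_{\mathrm{o},i}')\in\mathbb{R}^{C}
\]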

where FFN denotes a feed-forward network.

For the interaction decoder, we follow Ref. [30]. For each interaction decoder layer, the interaction queries \(\boldsymbol{Q}_{\mathrm{v}}\in{\mathbb{R}^{N\times d}}\) are initialized as the average of the intermediate output human queries \(\boldsymbol{Q}'_{\mathrm{h}}\) and object queries \(\boldsymbol{Q}'_{\mathrm{o}}\) of the corresponding instance decoder layer, formulated as
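With \(l\) indexing decoder layers (our notation), the initialization plausibly reads

\[
\boldsymbol{Q}_{\mathrm{v}}^{(l)} = \tfrac{1}{2}\big(\boldsymbol{Q}_{\mathrm{h}}^{\prime(l)} + \boldsymbol{Q}_{\mathrm{o}}^{\prime(l)}\big)
\]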

Next, we adopt union prototypes \(\boldsymbol{U}\in{\mathbb{R}^{C\times d}}\) as prompts and concatenate them with \(\boldsymbol{Q}_{\mathrm{v}}\in{\mathbb{R}^{N\times d}}\), producing conditional interaction queries, which are further fed into transformer decoder layers to update themselves with the union-guided query encoder feature \(\boldsymbol{T}_{\mathrm{u}}\), formulated as
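By analogy with the instance decoders, and assuming that the same kind of learnable position embedding \(\boldsymbol{P}\) is added, this update plausibly reads

\[
\boldsymbol{Q}_{\mathrm{v}}' = \text{Dec}_{\mathrm{v}}\big([\boldsymbol{Q}_{\mathrm{v}};\boldsymbol{U}] + \boldsymbol{P},\ \boldsymbol{T}_{\mathrm{u}}\big)
\]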

where \(\text{Dec}_{\mathrm{v}}\) represents an interaction decoder layer.

The output queries of the previous interaction decoder layer are also fused with the average of the instance queries before being decoded with the encoder feature.

Finally, each interaction query i is used to predict the interaction score \(s^{\mathrm{v}}_{i}\in{\mathbb{R}^{C}}\) for the C support verb categories, formulated as
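This step plausibly applies a feed-forward head, written \(\mathrm{FFN}_{\mathrm{v}}\) in our notation, to the i-th output interaction query \(\boldsymbol{Q}_{\mathrm{v},i}'\):

\[
s^{\mathrm{v}}_{i} = \mathrm{FFN}_{\mathrm{v}}(\boldsymbol{Q}_{\mathrm{v},i}')\in\mathbb{R}^{C}
\]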

4.5 Training loss

Following QPIC [7], we assign predictions to ground truths by bipartite matching with the Hungarian algorithm.

The matching cost is set equal to the training loss. This loss comprises a box regression loss \(\mathcal{L}_{\mathrm{b}}\) and an IoU loss \(\mathcal{L}_{\mathrm{i}}\) for both the human and object. Additionally, it includes an object classification loss \(\mathcal{L}_{\mathrm{c}}^{\mathrm{o}}\), and a verb classification loss \(\mathcal{L}_{\mathrm{c}}^{\mathrm{v}}\). The final loss \(\mathcal{L}\) is the weighted sum of these losses, formulated as follows:
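Assuming that the box regression and IoU terms are summed over the human and object boxes (\(k\in\{\mathrm{h},\mathrm{o}\}\)), the loss plausibly reads

\[
\mathcal{L} = \lambda_{\mathrm{b}}\sum_{k\in\{\mathrm{h},\mathrm{o}\}}\mathcal{L}_{\mathrm{b}}^{k}
+ \lambda_{\mathrm{i}}\sum_{k\in\{\mathrm{h},\mathrm{o}\}}\mathcal{L}_{\mathrm{i}}^{k}
+ \lambda_{\mathrm{c}}^{\mathrm{o}}\mathcal{L}_{\mathrm{c}}^{\mathrm{o}}
+ \lambda_{\mathrm{c}}^{\mathrm{v}}\mathcal{L}_{\mathrm{c}}^{\mathrm{v}}
\]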

where \(\lambda _{\mathrm{b}}\), \(\lambda _{\mathrm{i}}\), \(\lambda _{\mathrm{c}}^{\mathrm{o}}\), and \(\lambda _{\mathrm{c}}^{\mathrm{v}}\) denote hyper-parameters for reweighting.
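The matching and weighting steps can be illustrated on a toy cost matrix. The sketch finds the minimum-cost assignment by exhaustive search, which returns the same optimum as the Hungarian algorithm but only scales to tiny inputs. In practice, `scipy.optimize.linear_sum_assignment` solves the rectangular case efficiently. The loss weights are the values reported in Sect. 5.3. The function names and the toy cost values are hypothetical.

```python
from itertools import permutations

# Loss weights reported in Sect. 5.3 (following QPIC [7]).
LAMBDA = {"box": 2.5, "iou": 1.0, "obj_cls": 1.0, "verb_cls": 1.0}


def weighted_loss(terms):
    """Weighted sum of the loss terms (box and IoU terms assumed pre-summed over human and object)."""
    return sum(LAMBDA[name] * value for name, value in terms.items())


def min_cost_matching(cost):
    """Exhaustive bipartite matching of G ground truths to N >= G predictions.

    cost[i][j] is the cost of assigning prediction i to ground truth j.
    Returns (prediction index for each ground truth, total cost).
    Exponential in G, so only suitable for toy sizes.
    """
    n_pred, n_gt = len(cost), len(cost[0])
    best_assign, best_cost = None, float("inf")
    for preds in permutations(range(n_pred), n_gt):
        total = sum(cost[p][g] for g, p in enumerate(preds))
        if total < best_cost:
            best_assign, best_cost = list(preds), total
    return best_assign, best_cost


# Toy matching costs: 3 predictions (rows) x 2 ground truths (columns).
cost = [[0.9, 0.1], [0.2, 0.8], [0.5, 0.5]]
print(min_cost_matching(cost))
print(weighted_loss({"box": 0.4, "iou": 0.3, "obj_cls": 0.2, "verb_cls": 0.1}))
```

Because the matching cost equals the training loss, each entry of `cost` would in practice be `weighted_loss` evaluated on one prediction and ground-truth pair. Unmatched predictions are typically supervised as “no interaction”.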

5 Experiments

5.1 Datasets

To support our proposed visual-guided HOI detection, we define a new benchmark dataset based on HICO-DET [2], containing a base set for training and four novel sets for evaluation, as discussed in Sect. 3.2. HICO-DET [2] is a large-scale and popular HOI detection benchmark dataset, which is extended from the existing HOI classification benchmark dataset HICO [65] with instance annotations. It contains 600 HOI categories composed of 80 object categories and 117 action categories, with 38,118 training images and 9,658 testing images, providing a solid basis for building our visual-guided HOI detection benchmark. The final split results of the HOI category are reported in Table 1.

5.2 Evaluation metric

Following recent work [2, 30], we adopt the mean average precision (mAP) for evaluation. A predicted HOI is counted as a true positive if its human and object bounding boxes both have IoUs greater than 0.5 with a ground-truth pair of boxes, and its predicted HOI category is correct.
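The per-pair true-positive test can be sketched as below. The sketch covers only this criterion. A full mAP computation additionally ranks detections by score, matches each ground truth at most once, and averages precision over HOI categories. The dictionary layout and function names are hypothetical.

```python
def iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def is_true_positive(pred, gt, thr=0.5):
    """Category must match and both human and object IoUs must exceed thr."""
    return (pred["hoi"] == gt["hoi"]
            and iou(pred["human"], gt["human"]) > thr
            and iou(pred["object"], gt["object"]) > thr)


gt = {"hoi": ("hold", "cup"), "human": (0, 0, 10, 10), "object": (20, 20, 30, 30)}
good = {"hoi": ("hold", "cup"), "human": (1, 0, 10, 10), "object": (20, 20, 30, 30)}
shifted = {"hoi": ("hold", "cup"), "human": (0, 0, 10, 10), "object": (25, 20, 35, 30)}
print(is_true_positive(good, gt), is_true_positive(shifted, gt))
```

In the example, the slightly shifted human box in `good` still has an IoU of 0.9. The object box in `shifted` overlaps the ground truth with an IoU of only 1/3, so that prediction is rejected even though its category is correct.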

As discussed in Sect. 3.2, we split 600 HOI categories into a base set and four novel sets (i.e., UC, UO, UV, and UOUV). We conduct the model evaluation on the testing sets of these splits and also report the results on the full testing set (denoted as Full), which contains all HOI categories, for a comprehensive evaluation.

5.3 Implementation details

We adopt ResNet-50 [66] as our backbone. Each transformer encoder includes six encoder layers, and each decoder includes three decoder layers. Our model employs eight attention heads. We use the AdamW optimizer to train our model for 80 epochs. The initial learning rate is set to \(10^{-4}\) and divided by 10 at the 40th epoch. The number of queries N is set to 64. Following QPIC [7], we set the loss weights \(\lambda _{\mathrm{b}}\), \(\lambda _{\mathrm{i}}\), \(\lambda _{\mathrm{c}}^{\mathrm{o}}\), and \(\lambda _{\mathrm{c}}^{\mathrm{v}}\) to 2.5, 1, 1, and 1, respectively. Our model is implemented in PyTorch and trained on eight NVIDIA RTX 3090 GPUs with a batch size of 16.
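For reference, the reported settings can be collected into a configuration, and the learning-rate schedule can be expressed as a small function. The dictionary layout and key names are illustrative and not taken from the authors' code. The function reads “at the 40th epoch” as a drop after 40 full epochs, with epochs counted from 0; this reading is an assumption. In PyTorch, the same step schedule is typically obtained with `torch.optim.lr_scheduler.StepLR(optimizer, step_size=40, gamma=0.1)` stepped once per epoch.

```python
# Hyper-parameters as reported in Sect. 5.3 (key names are illustrative).
CONFIG = {
    "backbone": "resnet50",
    "encoder_layers": 6,
    "decoder_layers": 3,
    "attention_heads": 8,
    "num_queries": 64,
    "epochs": 80,
    "batch_size": 16,
    "optimizer": "AdamW",
    "lr": 1e-4,
    "lr_drop_epoch": 40,
    "loss_weights": {"box": 2.5, "iou": 1.0, "obj_cls": 1.0, "verb_cls": 1.0},
}


def learning_rate(epoch, cfg=CONFIG):
    """Step schedule: base lr, divided by 10 from epoch `lr_drop_epoch` on (0-indexed)."""
    return cfg["lr"] / 10 if epoch >= cfg["lr_drop_epoch"] else cfg["lr"]


print([learning_rate(e) for e in (0, 39, 40, 79)])
```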

5.4 Ablation study

In this section, we conduct an ablation study to verify the effectiveness of each component of our model; the results are reported in Table 2. The first row corresponds to our baseline model. It removes the progressive guidance and query reconstruction modules from our model and replaces the proposed conditional uncoupling decoder with a single decoder.

Effectiveness of uncoupling decoder

Starting from the baseline model, we replace its single decoder with our proposed uncoupling decoder, which consists of a human decoder, an object decoder, and an interaction decoder. Table 2 shows that the uncoupling decoder improves performance on all sets compared with the baseline. This demonstrates the benefit of using separate decoders for different subtasks.

Effectiveness of conditional uncoupling decoder

We then introduce the conditional query design into our model to provide task-specific knowledge. The results in Table 2 show that this design further improves performance, particularly on the base, UC, UO, and UV sets, which confirms the benefit of providing guidance to the decoders.

Effectiveness of visual-guided query reconstruction

Next, we add the visual-guided query reconstruction design to introduce learnable common HOI knowledge. As shown in Table 2, reconstructing the guidance features with common prototypes substantially improves performance on nearly all base and novel sets, which verifies the effectiveness of this design.
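The exact visual-guided query reconstruction (VGQR) formulation is defined in Sect. 4 and is not reproduced here. The following generic sketch shows one common way to "reconstruct a feature with prototypes": the guidance feature is compared with a bank of prototypes, and the output is the softmax-weighted sum of those prototypes. The dot-product similarity, the `temperature` parameter, and all names are assumptions for illustration. In a real model the prototypes would be learnable parameters trained end to end; here they are fixed lists.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def softmax(scores: Sequence[float]) -> List[float]:
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def reconstruct_with_prototypes(feature: Vector, prototypes: Sequence[Vector],
                                temperature: float = 1.0) -> List[float]:
    """Re-express a guidance feature as a softmax-weighted sum of prototypes.

    The output lies in the convex hull of the prototypes, so it can only
    carry patterns that the shared prototype bank has captured.
    """
    scores = [sum(f * p for f, p in zip(feature, proto)) / temperature
              for proto in prototypes]
    weights = softmax(scores)
    dim = len(feature)
    return [sum(w * proto[d] for w, proto in zip(weights, prototypes))
            for d in range(dim)]
```

A low temperature makes the reconstruction snap to the most similar prototype. A high temperature blends prototypes more evenly.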

5.5 Setup analysis

We analyze the setups of our proposed visual-guided HOI detection task on the newly constructed benchmark dataset, covering both support sampling strategies and evaluation settings, to reveal key findings for this new task.

Different sampling strategies

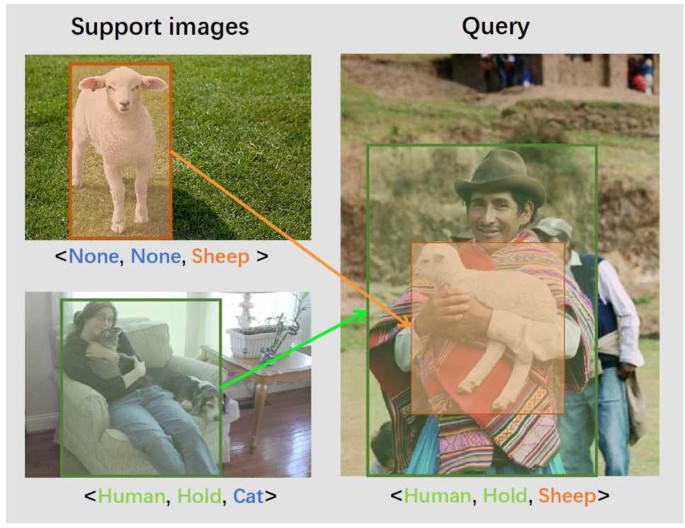

For the guidance sampler, a straightforward approach is to sample support images that contain the same HOI triplet as the query image, which we refer to as “composition guidance”. However, this strategy ignores the compositional nature of HOI. It cannot handle the situation in which the target object and the target verb occur in different support images, which we denote as “disentangled guidance”. We argue that the disentangled setup is more meaningful for two reasons. First, it mimics the human ability to learn HOI relations in a disentangled way. For example, as shown in Fig. 3, even a baby who has seen a human holding a cat and, separately, a sheep can easily recognize a human holding a sheep. This ability plays a very important role in our daily lives. Second, it does not require any support image to contain the target HOI category, which makes it more practical for real-world scenarios.

Real-world scenario of visual-guided HOI detection in a disentangled way

To this end, we explore a new sampling strategy for “disentangled guidance”, which involves two semi-positive samples: one with a positive object and a negative verb, and the other with a negative object and a positive verb.
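The four roles a support sample can play relative to the target HOI category are easiest to see as a small classification function. This sketch assumes each support sample carries a single annotated <verb, object> label. Real support images may contain several HOI annotations, and how those are handled is not specified here. `HOILabel` and `guidance_role` are hypothetical names.

```python
from typing import NamedTuple


class HOILabel(NamedTuple):
    verb: str
    obj: str


def guidance_role(target: HOILabel, support: HOILabel) -> str:
    """Classify a support annotation relative to the target HOI category."""
    verb_match = support.verb == target.verb
    obj_match = support.obj == target.obj
    if verb_match and obj_match:
        return "positive"              # same composition (used by CG)
    if obj_match:
        return "semi_positive_object"  # positive object, negative verb (DG)
    if verb_match:
        return "semi_positive_verb"    # positive verb, negative object (DG)
    return "negative"
```

In the Fig. 3 example, for the target <hold, sheep> a support showing <hold, cat> is a semi-positive verb sample. A support showing a sheep with a different verb is a semi-positive object sample.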

Discussion on different sampling strategies

We study the effects of the two proposed support sampling strategies, i.e., composition guidance (CG) and disentangled guidance (DG), in both training and evaluation. Specifically, we train the proposed model with either CG or DG and evaluate each trained model under both CG and DG. Table 3 shows the results.

First, regardless of whether the model is trained with CG or DG, evaluation under CG yields much better performance than evaluation under DG. This is because CG samples provide stronger HOI guidance and require no disentanglement capability. CG therefore makes the task much easier than DG, where the verb and object guidance is provided in different instances. However, in real-world settings, directly providing CG samples may be difficult or even impossible, which limits their practicality. In contrast, DG samples are much easier to acquire.

Second, we observe that training with the same setup as the evaluation always leads to better performance than training with the other setup. This is expected, because training and testing with different setups exposes the model to an out-of-distribution problem, which reduces its effectiveness. However, training with DG and evaluating with CG still achieves acceptable performance, whereas training with CG and evaluating with DG leads to much worse performance. This is because training with CG does not encourage the model to learn disentanglement from the very beginning. Our model has explicitly disentangled prototype and decoder designs, but CG training still forces it to operate compositionally rather than in a disentangled way, so it loses the ability to generalize to DG samples. In contrast, training with DG makes our model perform HOI detection in a disentangled way, which also works for CG samples and yields acceptable performance. Hence, we consider DG to be a more generalizable training setup, and we hope it will inspire further research.

5.6 Discussion on different training sampling strategies

As discussed above, we use composition guidance (CG) and disentangled guidance (DG) for evaluation. CG samples one positive support that contains the target HOI composition, and all remaining supports are negative.

On the other hand, DG entails selecting two semi-positive samples, where one consists of a positive object and a negative verb, while the other features a positive verb and a negative object. The remaining samples are designated as negative.
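The two sampling rules can be sketched as a function that assembles one support set from role-specific candidate pools. The following are all assumptions for illustration: the number of supports `k`, the pool layout, shuffling the supports, and treating "negative" as matching neither the target verb nor the target object. The paper's sampler may differ in these details. `build_support_set` is a hypothetical name.

```python
import random
from typing import Dict, List, Sequence


def build_support_set(pools: Dict[str, Sequence[str]], mode: str, k: int,
                      rng: random.Random) -> List[str]:
    """Draw k support samples for one target HOI category.

    pools maps a role ("positive", "semi_positive_object",
    "semi_positive_verb", "negative") to candidate support-sample ids.
    """
    if mode == "CG":
        # One positive composition; all other supports are negative.
        picks = [rng.choice(pools["positive"])]
    elif mode == "DG":
        # Object and verb evidence come from two different samples.
        picks = [rng.choice(pools["semi_positive_object"]),
                 rng.choice(pools["semi_positive_verb"])]
    else:
        raise ValueError(f"unknown guidance mode: {mode!r}")
    if k < len(picks):
        raise ValueError("k is too small for this guidance mode")
    # Fill the remaining slots with distinct negatives.
    picks += rng.sample(list(pools["negative"]), k - len(picks))
    rng.shuffle(picks)  # avoid leaking which slot holds the guidance
    return picks
```

Under DG, no single support contains the target composition. The model has to combine the object cue from one sample with the verb cue from another.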

Because CG samples offer more explicit HOI information and do not require disentanglement learning, they make the task inherently easier than DG, where the verb and object guidance is provided separately in different support samples. This raises an important question: can including CG samples during training benefit the harder task, i.e., the DG setup?

A straightforward method is to sample CG and DG samples randomly according to fixed proportions. We conduct experiments with two random strategies. The first selects CG and DG samples with equal probability (50% each, referred to as “R1”). The second selects CG with a probability of 20% and DG with 80% (referred to as “R2”). In addition, we draw inspiration from curriculum learning, which suggests that learning easier tasks before harder ones can facilitate training. Accordingly, we explore two further strategies. The first uses CG samples for the initial 25 epochs and DG samples for the subsequent 25 epochs (referred to as “CL1”). The second uses CG samples for the initial 10 epochs and DG samples for the following 40 epochs (referred to as “CL2”). Table 4 reports the results under the DG evaluation setup. As shown in Table 4, the random strategies (i.e., “R1” and “R2”) perform worse than training with DG samples only.
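The six training strategies compared in Table 4 differ only in how each training sample's guidance mode is chosen. The sketch below encodes them as a lookup. Two points are assumptions: drawing the mode per sample rather than per batch, and 0-indexed epochs. The table names and `guidance_mode` are hypothetical.

```python
import random

# Random strategies: probability that a training sample uses CG.
RANDOM_CG_PROB = {"R1": 0.5, "R2": 0.2}
# Curriculum strategies: number of initial epochs trained with CG only.
CURRICULUM_CG_EPOCHS = {"CL1": 25, "CL2": 10}


def guidance_mode(strategy: str, epoch: int, rng: random.Random) -> str:
    """Return "CG" or "DG" for one training sample (epochs are 0-indexed)."""
    if strategy in ("CG", "DG"):
        return strategy  # single-mode training
    if strategy in RANDOM_CG_PROB:
        return "CG" if rng.random() < RANDOM_CG_PROB[strategy] else "DG"
    if strategy in CURRICULUM_CG_EPOCHS:
        # Easy CG episodes first, then switch to the harder DG episodes.
        return "CG" if epoch < CURRICULUM_CG_EPOCHS[strategy] else "DG"
    raise ValueError(f"unknown strategy: {strategy!r}")
```

The random strategies mix the two modes throughout training. The curriculum strategies switch once from the easier mode to the harder one, which is the ordering the text credits for their advantage.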

In contrast, the curriculum learning-based strategies (“CL1” and “CL2”) improve performance on the novel sets. This demonstrates that introducing CG samples through curriculum learning benefits the DG evaluation task. Among all strategies, “CL1” achieves the best performance and can therefore be considered a valuable training strategy.

5.7 Comparison with state-of-the-art methods

We compare our proposed method with two state-of-the-art HOI detection methods, i.e., THID [32] and GEN-VLKT [30]. For a fair comparison, we retrain these models on our proposed base set using their default settings. The results are shown in Table 5. Our proposed method outperforms the other methods, even though the compared models leverage the powerful CLIP model [20] trained on a very large-scale dataset. This suggests that providing visual guidance is an alternative and feasible way to improve the generalization ability of HOI models.

6 Qualitative results

We show qualitative results of our model using composition guidance and disentangled guidance in Fig. 4 and Fig. 5, respectively. Our proposed VG-HOI accurately detects interacting human-object pairs, and its detections are particularly effective in simple scenes involving only a few humans and objects. Samples of novel categories can be detected with only one shot of visual guidance, without retraining.

Fig. 4 Visualization of the detection results obtained from our proposed VG-HOI model using composition guidance. The upper row shows our model predictions, where interacting human and object pairs are highlighted by boxes of the same color. The bottom row shows the corresponding composition guidance, where the blue and orange boxes indicate humans and objects, respectively.

Fig. 5 Visualization of the detection results obtained from our proposed VG-HOI model using disentangled guidance. Interacting human and object pairs are highlighted by boxes of the same color.

7 Conclusion

In this paper, we introduce the first visual-guided HOI (VG-HOI) detection task, which aims to detect unseen HOI categories using a few guidance images. To support this task, we construct a new VG-HOI detection benchmark dataset that includes a base set and four novel sets for comprehensive evaluation. We then propose a VG-HOI model with progressive guidance, query reconstruction, and a conditional uncoupling decoder, which incorporate common HOI knowledge and task-specific cues to improve the model’s generalization ability. Moreover, we explore a more practical way of sampling guidance, i.e., disentangled guidance. Experimental results verify the effectiveness of our model and suggest that providing visual guidance is a feasible approach to open-world HOI detection.

Data availability

The HICO-DET dataset images used in this study are publicly available at https://drive.google.com/open?id=1QZcJmGVlF9f4h-XLWe9Gkmnmj2z1gSnk. The dataset split files for our proposed visual-guided HOI detection benchmark are publicly accessible at https://github.com/nanfangAlan/VGHOI.

Abbreviations

- CUD: conditional uncoupling decoder
- DG: disentangled guidance
- FFN: feed-forward network
- HOI: human-object interaction
- mAP: mean average precision
- ROIAlign: region of interest alignment
- UC: unseen composition
- UO: unseen object
- UV: unseen verb
- UOUV: unseen object and verb
- VG-HOI: visual-guided human-object interaction
- VGQR: visual-guided query reconstruction
- VLM: vision-language model

References

Gkioxari, G., Girshick, R., Dollár, P., & He, K. (2018). Detecting and recognizing human-object interactions. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 8359–8367). Piscataway: IEEE.

Chao, Y.-W., Liu, Y., Liu, X., Zeng, H., & Deng, J. (2018). Learning to detect human-object interactions. In Proceedings of the IEEE winter conference on applications of computer vision (pp. 381–389). Piscataway: IEEE.

Zhang, F., Campbell, D., & Gould, S. (2022). Efficient two-stage detection of human-object interactions with a novel unary-pairwise transformer. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 20104–20112). Piscataway: IEEE.

Yang, D., Zou, Y., Zhang, C., Cao, M., & Chen, J. (2021). RR-Net: relation reasoning for end-to-end human-object interaction detection. IEEE Transactions on Circuits and Systems for Video Technology, 32, 3853–3865.

Liao, Y., Liu, S., Wang, F., Chen, Y., Qian, C., & Feng, J. (2020). PPDM: parallel point detection and matching for real-time human-object interaction detection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 482–490). Piscataway: IEEE.

Carion, N., Massa, F., Synnaeve, G., Usunier, N., Kirillov, A., & Zagoruyko, S. (2020). End-to-end object detection with transformers. In A. Vedaldi, H. Bischof, T. Brox, & J. Frahm (Eds.), Proceedings of the 16th European conference on computer vision (pp. 213–229). Cham: Springer.

Tamura, M., Ohashi, H., & Yoshinaga, T. (2021). QPIC: query-based pairwise human-object interaction detection with image-wide contextual information. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 10410–10419). Piscataway: IEEE.

Zou, C., Wang, B., Hu, Y., Liu, J., Wu, Q., Zhao, Y., Li, B., Zhang, C., Zhang, C., Wei, Y., et al. (2021). End-to-end human object interaction detection with HOI transformer. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 11825–11834). Piscataway: IEEE.

Chen, M., Liao, Y., Liu, S., Chen, Z., Wang, F., & Qian, C. (2021). Reformulating HOI detection as adaptive set prediction. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 9004–9013). Piscataway: IEEE.

Kim, B., Lee, J., Kang, J., Kim, E.-S., & Kim, H. (2021). HOTR: end-to-end human-object interaction detection with transformers. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 74–83). Piscataway: IEEE.

Chen, J., & Yanai, K. (2023). QAHOI: query-based anchors for human-object interaction detection. In Proceedings of the 18th international conference on machine vision and applications (pp. 1–5). Piscataway: IEEE.

Cheng, Y., Wang, Z., Zhan, W., & Duan, H. (2022). Multi-scale human-object interaction detector. IEEE Transactions on Circuits and Systems for Video Technology, 33, 1827–1838.

Tu, D., Min, X., Duan, H., Guo, G., Zhai, G., & Shen, W. (2022). Iwin: human-object interaction detection via transformer with irregular windows. In S. Avidan, G. J. Brostow, M. Cissé, G. M. Farinella, & T. Hassner (Eds.), Proceedings of the 17th European conference on computer vision (pp. 87–103). Cham: Springer.

Park, J., Lee, S., Heo, H., Choi, H., & Kim, H. (2022). Consistency learning via decoding path augmentation for transformers in human object interaction detection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 1019–1028). Piscataway: IEEE.

Kim, S., Jung, D., & Cho, M. (2023). Relational context learning for human-object interaction detection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 2925–2934). Piscataway: IEEE.

Wang, Y., Liu, Q., & Lei, Y. (2024). TED-Net: dispersal attention for perceiving interaction region in indirectly-contact HOI detection. IEEE Transactions on Circuits and Systems for Video Technology, 34, 5603–5615.

Ren, W., Luo, J., Jiang, W., Qu, L., Han, Z., Tian, J., & Liu, H. (2024). Learning self- and cross-triplet context clues for human-object interaction detection. IEEE Transactions on Circuits and Systems for Video Technology, 34, 9760–9773.

Jia, M., Zhao, L., Li, G., & Zheng, Y. (2025). ContextHOI: spatial context learning for human-object interaction detection. In T. Walsh, J. Shah, & Z. Kolter (Eds.), Proceedings of the 39th AAAI conference on artificial intelligence (pp. 3931–3939). Palo Alto: AAAI Press.

Hu, Y., Ding, C., Sun, C., Huang, S., & Xu, X. (2025). Bilateral collaboration with large vision-language models for open vocabulary human-object interaction detection. In Proceedings of the IEEE/CVF international conference on computer vision (pp. 20126–20136). Piscataway: IEEE.

Radford, A., Kim, J., Hallacy, C., Ramesh, A., Goh, G., Agarwal, S., Sastry, G., Askell, A., Mishkin, P., Clark, J., et al. (2021). Learning transferable visual models from natural language supervision. In M. Meila, & T. Zhang (Eds.), Proceedings of the international conference on machine learning (pp. 8748–8763). Retrieved November 25, 2025, from https://proceedings.mlr.press/v139/radford21a/radford21a.pdf.

Wang, X., Zhang, X., Cao, Y., Wang, W., Shen, C., & Huang, T. (2023). SegGPT: towards segmenting everything in context. In Proceedings of the IEEE/CVF international conference on computer vision (pp. 1130–1140). Piscataway: IEEE.

Wang, X., Wang, W., Cao, Y., Shen, C., & Huang, T. (2023). Images speak in images: a generalist painter for in-context visual learning. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 6830–6839). Piscataway: IEEE.

Ren, S., He, K., Girshick, R., & Sun, J. (2015). Faster R-CNN: towards real-time object detection with region proposal networks. In C. Cortes, N. D. Lawrence, D. D. Lee, M. Sugiyama, & R. Garnett (Eds.), Proceedings of the 29th international conference on neural information processing systems (pp. 91–99). Red Hook: Curran Associates.

Zhang, A., Liao, Y., Liu, S., Lu, M., Wang, Y., Gao, C., & Li, X. (2021). Mining the benefits of two-stage and one-stage HOI detection. In M. Ranzato, A. Beygelzimer, Y. N. Dauphin, P. Liang, & J. W. Vaughan (Eds.), Proceedings of the 35th international conference on neural information processing systems (pp. 17209–17220). Red Hook: Curran Associates.

Zhou, D., Liu, Z., Wang, J., Wang, L., Hu, T., Ding, E., & Wang, J. (2022). Human-object interaction detection via disentangled transformer. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 19568–19577). Piscataway: IEEE.

Zhang, Y., Pan, Y., Yao, T., Huang, R., Mei, T., & Chen, C.-W. (2022). Exploring structure-aware transformer over interaction proposals for human-object interaction detection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 19548–19557). Piscataway: IEEE.

Dong, L., Li, Z., Xu, K., Zhang, Z., Yan, L., Zhong, S., & Zou, X. (2022). Category-aware transformer network for better human-object interaction detection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 19538–19547). Piscataway: IEEE.

Iftekhar, A., Chen, H., Kundu, K., Li, X., Tighe, J., & Modolo, D. (2022). What to look at and where: semantic and spatial refined transformer for detecting human-object interactions. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 5353–5363). Piscataway: IEEE.

Qu, X., Ding, C., Li, X., Zhong, X., & Tao, D. (2022). Distillation using oracle queries for transformer-based human-object interaction detection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 19558–19567). Piscataway: IEEE.

Liao, Y., Zhang, A., Lu, M., Wang, Y., Li, X., & Liu, S. (2022). GEN-VLKT: simplify association and enhance interaction understanding for HOI detection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 20123–20132). Piscataway: IEEE.

Kim, B., Mun, J., On, K.-W., Shin, M., Lee, J., & Kim, E.-S. (2022). MSTR: multi-scale transformer for end-to-end human-object interaction detection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 19578–19587). Piscataway: IEEE.

Wang, S., Duan, Y., Ding, H., Tan, Y.-P., Yap, K.-H., & Yuan, J. (2022). Learning transferable human-object interaction detector with natural language supervision. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 939–948). Piscataway: IEEE.

Ning, S., Qiu, L., Liu, Y., & He, X. (2023). HOICLIP: efficient knowledge transfer for HOI detection with vision-language models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 23507–23517). Piscataway: IEEE.

Luo, J., Ren, W., Jiang, W., Chen, X., Wang, Q., Han, Z., & Liu, H. (2024). Discovering syntactic interaction clues for human-object interaction detection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 28212–28222). Piscataway: IEEE.

Cao, Y., Tang, Q., Yang, F., Su, X., You, S., Lu, X., & Xu, C. (2023). Re-mine, learn and reason: exploring the cross-modal semantic correlations for language-guided HOI detection. In Proceedings of the IEEE/CVF international conference on computer vision (pp. 23492–23503). Piscataway: IEEE.

Lei, T., Caba, F., Chen, Q., Jin, H., Peng, Y., & Liu, Y. (2023). Efficient adaptive human-object interaction detection with concept-guided memory. In Proceedings of the IEEE/CVF international conference on computer vision (pp. 6480–6490). Piscataway: IEEE.

Mao, Y., Deng, J., Zhou, W., Li, L., Fang, Y., & Li, H. (2023). CLIP4HOI: towards adapting clip for practical zero-shot HOI detection. In A. Oh, T. Naumann, A. Globerson, K. Saenko, M. Hardt, & S. Levine (Eds.), Proceedings of the 37th international conference on neural information processing systems (pp. 45895–45906). Red Hook: Curran Associates.

Wang, G., Guo, Y., Xu, Z., & Kankanhalli, M. (2024). Bilateral adaptation for human-object interaction detection with occlusion-robustness. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 27970–27980). Piscataway: IEEE.

Yuan, H., Jiang, J., Albanie, S., Feng, T., Huang, Z., Ni, D., & Tang, M. (2022). RLIP: relational language-image pre-training for human-object interaction detection. In S. Koyejo, S. Mohamed, A. Agarwal, D. Belgrave, K. Cho, & A. Oh (Eds.), Proceedings of the 36th international conference on neural information processing systems (pp. 37416–37431). Red Hook: Curran Associates.

Yuan, H., Zhang, S., Wang, X., Albanie, S., Pan, Y., Feng, T., Jiang, J., Ni, D., Zhang, Y., & Zhao, D. (2023). RLIPv2: fast scaling of relational language-image pre-training. In Proceedings of the IEEE/CVF international conference on computer vision (pp. 21649–21661). Piscataway: IEEE.

Zheng, S., Xu, B., & Jin, Q. (2023). Open-category human-object interaction pre-training via language modeling framework. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 19392–19402). Piscataway: IEEE.

Kang, B., Liu, Z., Wang, X., Yu, F., Feng, J., & Darrell, T. (2019). Few-shot object detection via feature reweighting. In Proceedings of the IEEE/CVF international conference on computer vision (pp. 8420–8429). Piscataway: IEEE.

Lu, X., Diao, W., Mao, Y., Li, J., Wang, P., Sun, X., & Fu, K. (2023). Breaking immutable: information-coupled prototype elaboration for few-shot object detection. In B. Williams, Y. Chen, & J. Neville (Eds.), Proceedings of the 37th AAAI conference on artificial intelligence (pp. 1844–1852). Palo Alto: AAAI Press.

Zhang, G., Luo, Z., Cui, K., Lu, S., & Xing, E. (2022). Meta-DETR: image-level few-shot detection with inter-class correlation exploitation. IEEE Transactions on Pattern Analysis and Machine Intelligence, 45, 12832–12843.

Bulat, A., Guerrero, R., Martinez, B., & Tzimiropoulos, G. (2023). FS-DETR: few-shot DEtection TRansformer with prompting and without re-training. In Proceedings of the IEEE/CVF international conference on computer vision (pp. 11793–11802). Piscataway: IEEE.

Han, Y., Zhang, J., Xue, Z., Xu, C., Shen, X., Wang, Y., Wang, C., Liu, Y., & Li, X. (2024). Reference twice: a simple and unified baseline for few-shot instance segmentation. IEEE Transactions on Pattern Analysis and Machine Intelligence, 46, 9221–9238.

Tian, Z., Zhao, H., Shu, M., Yang, Z., Li, R., & Jia, J. (2020). Prior guided feature enrichment network for few-shot segmentation. IEEE Transactions on Pattern Analysis and Machine Intelligence, 44, 1050–1065.

Sun, Y., Chen, Q., He, X., Wang, J., Feng, H., Han, J., Ding, E., Cheng, J., Li, Z., & Wang, J. (2022). Singular value fine-tuning: few-shot segmentation requires few-parameters fine-tuning. In S. Koyejo, S. Mohamed, A. Agarwal, D. Belgrave, K. Cho, & A. Oh (Eds.), Proceedings of the 36th international conference on neural information processing systems (pp. 37484–37496). Red Hook: Curran Associates.

Zhang, R., Jiang, Z., Guo, Z., Yan, S., Pan, J., Ma, X., Dong, H., Gao, P., & Li, H. (2023). Personalize segment anything model with one shot (pp. 13754–13783). arXiv preprint. arXiv:2305.03048.

Kirillov, A., Mintun, E., Ravi, N., Mao, H., Rolland, C., Gustafson, L., Xiao, T., Whitehead, S., Berg, A., Lo, W.-Y., et al. (2023). Segment anything. In Proceedings of the IEEE/CVF international conference on computer vision (pp. 4015–4026). Piscataway: IEEE.

Sun, Y., Chen, J., Zhang, S., Zhang, X., Chen, Q., Zhang, G., Ding, E., Wang, J., & Li, Z. (2024). VRP-SAM: SAM with visual reference prompt. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 23565–23574). Piscataway: IEEE.

Liu, Y., Liu, N., Yao, X., & Han, J. (2022). Intermediate prototype mining transformer for few-shot semantic segmentation. In Proceedings of the 36th international conference on neural information processing systems (pp. 38020–38031). Red Hook: Curran Associates.

Liu, Y., Liu, N., Cao, Q., Yao, X., Han, J., & Shao, L. (2022). Learning non-target knowledge for few-shot semantic segmentation. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 11573–11582). Piscataway: IEEE.

Shaban, A., Bansal, S., Liu, Z., Essa, I., & Boots, B. (2017). One-shot learning for semantic segmentation. arXiv preprint. arXiv:1709.03410.

Ji, Z., Liu, X., Pang, Y., & Li, X. (2020). SGAP-Net: semantic-guided attentive prototypes network for few-shot human-object interaction recognition. In Proceedings of the 34th AAAI conference on artificial intelligence (pp. 11085–11092). Palo Alto: AAAI Press.

Ji, Z., Liu, X., Pang, Y., Ouyang, W., & Li, X. (2020). Few-shot human-object interaction recognition with semantic-guided attentive prototypes network. IEEE Transactions on Image Processing, 30, 1648–1661.

Bansal, A., Rambhatla, S., Shrivastava, A., & Chellappa, R. (2020). Detecting human-object interactions via functional generalization. In Proceedings of the 34th AAAI conference on artificial intelligence (pp. 10460–10469). Palo Alto: AAAI Press.

Liu, Y., Yuan, J., & Chen, C. (2020). ConsNet: learning consistency graph for zero-shot human-object interaction detection. In C. Chen, R. Cucchiara, X.-S. Hua, G.-J. Qi, E. Ricci, Z. Zhang, & R. Zimmermann (Eds.), Proceedings of the 28th ACM international conference on multimedia (pp. 4235–4243). New York: ACM.

Hou, Z., Peng, X., Qiao, Y., & Tao, D. (2020). Visual compositional learning for human-object interaction detection. In A. Vedaldi, H. Bischof, T. Brox, & J. Frahm (Eds.), Proceedings of the 16th European conference on computer vision (pp. 584–600). Cham: Springer.

He, K., Gkioxari, G., Dollár, P., & Girshick, R. (2017). Mask R-CNN. In Proceedings of the IEEE international conference on computer vision (pp. 2961–2969). Piscataway: IEEE.

Doersch, C., Gupta, A., & Zisserman, A. (2020). CrossTransformers: spatially-aware few-shot transfer. In H. Larochelle, M. Ranzato, R. Hadsell, M. Balcan, & H. Lin (Eds.), Proceedings of the 34th international conference on neural information processing systems (pp. 21981–21993). Red Hook: Curran Associates.

Wang, Y., Zhang, X., Yang, T., & Sun, J. (2022). Anchor DETR: query design for transformer-based detector. In Proceedings of the 36th AAAI conference on artificial intelligence (pp. 2567–2575). Palo Alto: AAAI Press.

Meng, D., Chen, X., Fan, Z., Zeng, G., Li, H., Yuan, Y., Sun, L., & Wang, J. (2021). Conditional DETR for fast training convergence. In Proceedings of the IEEE international conference on computer vision (pp. 3651–3660). Piscataway: IEEE.

Liu, S., Li, F., Zhang, H., Yang, X., Qi, X., Su, H., Zhu, J., & Zhang, L. (2022). DAB-DETR: dynamic anchor boxes are better queries for DETR. arXiv preprint. arXiv:2201.12329.

Chao, Y.-W., Wang, Z., He, Y., Wang, J., & Deng, J. (2015). HICO: a benchmark for recognizing human-object interactions in images. In Proceedings of the IEEE international conference on computer vision (pp. 1017–1025). Piscataway: IEEE.

He, K., Zhang, X., Ren, S., & Sun, J. (2016). Deep residual learning for image recognition. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 770–778). Piscataway: IEEE.

Funding

This work was supported by the National Natural Science Foundation of China (No. 62401447) and the Key Research and Development Program of Shaanxi (Nos. 2024GX-YBXM-051 and 2024CY2-GJHX08).

Author information


Contributions

All of the authors contributed to the study conception and design. Conceptualization of this study, methodology, writing: FN, NZ, and NL; Investigation, validation: FN, NL, and HH; Funding acquisition, Supervision: BW. All the authors read and approved the final manuscript.


Ethics declarations

Competing interests

The authors have no competing interests to declare relevant to this article’s content.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Nan, F., Zhang, N., Liu, N. et al. Visual-guided human-object interaction detection. Vis. Intell. 3, 30 (2025). https://doi.org/10.1007/s44267-025-00102-0


DOI: https://doi.org/10.1007/s44267-025-00102-0